Description

This project develops a sign language application for people who are deaf or hard of hearing. Sign language is very important to them, and the application helps them communicate easily with people who do not understand it. The project is intended for hearing people as well as for deaf or speech-impaired people who use sign language. It aims to take a basic step towards closing the communication gap. For project files, visit GitHub or Contact.

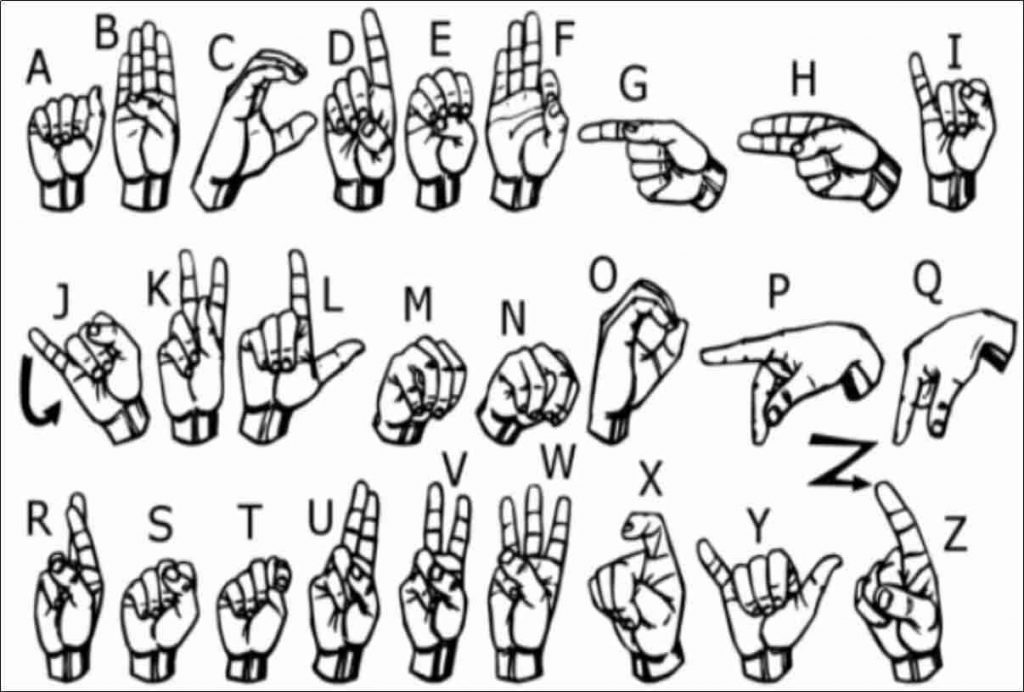

The main focus of this study is to create a vision-based system that identifies sign language gestures from video sequences. A vision-based system was chosen because it is simpler and provides a more intuitive way of communicating. This report covers 46 different gestures.

CNN

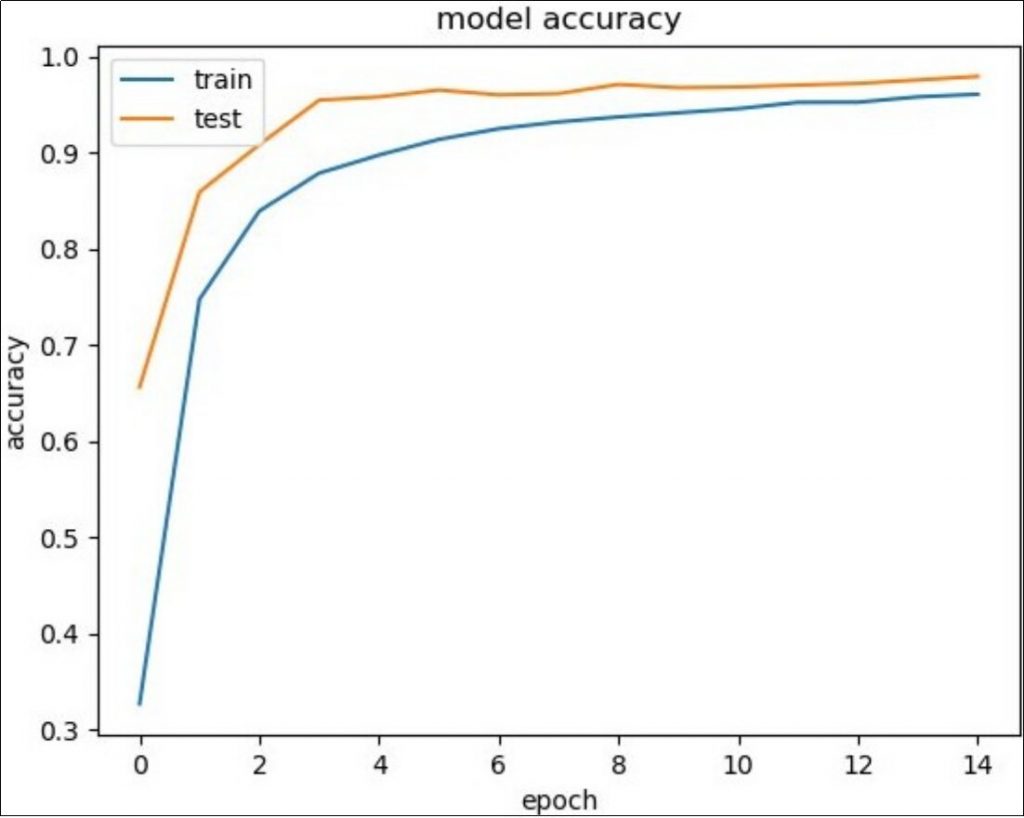

Video sequences contain both temporal and spatial features, so we use two different models, one for each. For the spatial features of the video sequences we use the Inception model, a deep CNN (convolutional neural network). The CNN is trained on frames extracted from the video sequences of the training data. For the temporal features we use an RNN (recurrent neural network). The trained CNN makes a prediction for each individual frame of a video, and either this sequence of predictions or the pool layer output is used. The predictions or pool layer outputs are then given to the RNN, which is trained on the temporal features. The data set consists of American Sign Language gestures, with 106500 images belonging to 26 categories. Using the CNN predictions as input to the RNN, an accuracy of 97% was obtained.
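The second stage described above can be sketched as follows. This is a minimal illustration that assumes a plain Elman-style recurrence with a tanh activation; the text does not specify the recurrent architecture, and the function name `rnn_classify` and the weight matrices `w_in`, `w_rec`, and `w_out` are hypothetical placeholders for trained parameters.

```python
import math


def rnn_classify(frame_predictions, w_in, w_rec, w_out):
    """Run a simple recurrent pass over per-frame CNN outputs.

    frame_predictions: list of vectors, one per video frame, in order.
    w_in, w_rec, w_out: hypothetical weight matrices (nested lists).
    Returns the index of the highest-scoring gesture class.
    """
    hidden = [0.0] * len(w_rec)
    for x in frame_predictions:
        new_hidden = []
        for j in range(len(hidden)):
            # Combine the current frame with the state carried over
            # from earlier frames; this is the temporal part.
            total = sum(w_in[j][i] * x[i] for i in range(len(x)))
            total += sum(w_rec[j][k] * hidden[k] for k in range(len(hidden)))
            new_hidden.append(math.tanh(total))
        hidden = new_hidden
    # Score every class from the final hidden state.
    scores = [sum(w_out[c][j] * hidden[j] for j in range(len(hidden)))
              for c in range(len(w_out))]
    return scores.index(max(scores))
```

Only the final hidden state is used for the decision, so the class depends on the whole ordered sequence of frames rather than on any single frame.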

The system consists of four main modules: Data Collection, Preprocessing, Feature Extraction, and Classification. The preprocessing phase includes skin filtering and histogram matching. Feature extraction is eigenvector based, and classification uses an eigenvalue-weighted Euclidean distance technique. In this article, a recognition rate of 97% is obtained for 26 different letters.
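To make the classification step concrete, here is a small sketch of nearest-template classification with an eigenvalue-weighted Euclidean distance. The exact weighting is not given in the text, so the formula below (each squared difference multiplied by its eigenvalue) is an assumption, and `weighted_distance`, `classify`, and the template values are hypothetical.

```python
import math


def weighted_distance(a, b, eigenvalues):
    """Euclidean distance with each component weighted by an eigenvalue.

    Assumed form: sqrt(sum(lambda_i * (a_i - b_i) ** 2)).
    """
    return math.sqrt(sum(w * (x - y) ** 2
                         for w, x, y in zip(eigenvalues, a, b)))


def classify(sample, templates, eigenvalues):
    """Return the label of the template closest to the sample."""
    return min(templates,
               key=lambda label: weighted_distance(sample, templates[label],
                                                   eigenvalues))


# Two made-up letter templates in a 2-component feature space.
templates = {"A": [0.0, 0.0], "B": [1.0, 1.0]}
eigenvalues = [4.0, 1.0]  # the first component carries more weight
label = classify([0.2, 0.9], templates, eigenvalues)
```

With these made-up numbers the sample is assigned to "A", whereas an unweighted distance would pick "B", which illustrates how the weights emphasize the components with larger eigenvalues.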

Introduction

A gesture is a movement of any body part, such as the face or a hand. Here we use image processing and computer vision for gesture recognition. Gesture recognition enables the computer to understand human actions and to act as an interpreter between people and computers. It gives people the potential to interact naturally with computers without any physical contact with mechanical devices. Sign language gestures are performed by deaf and speech-impaired communities. These communities use sign language when spoken communication is not possible, or when writing is difficult but seeing is possible. In those situations sign language is the only way for people to exchange information. Hearing people may use sign language when they do not want to speak, but for deaf and speech-impaired communities it is the only means of communication. Sign language serves the same purpose as spoken language.

Vision Based

In vision-based methods, a camera is the input device used to observe hand or finger information. Vision-based methods require only a camera, which allows natural interaction between people and computers. These systems tend to complement biological vision by describing artificial vision systems implemented in software and/or hardware. This poses a difficult problem, because such systems need real-time performance and should be invariant to the background, insensitive to lighting, and independent of the camera. They should also be optimized to meet accuracy and robustness requirements. The camera captures an image, some features are extracted from it, and these features are used as input to a classification algorithm.

Convolution

The first layers that receive an input signal are called convolution filters. Convolution is a process in which the network tries to label the input signal by referring to what it has learned in the past. If the input signal looks like hand images the network has seen before, the “hand” reference signal is mixed into, or convolved with, the input signal. The resulting output signal is then passed on to the next layer. Convolution has the useful property of translation invariance. Intuitively, each convolution filter represents a feature of interest, and the CNN algorithm learns which features make up the resulting reference. The strength of the output signal does not depend on where the features are located, only on whether they are present. Therefore a hand can be in different positions and can have different skin colors, and the CNN algorithm can still recognize it.
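The position independence described above can be illustrated with a tiny example. This is a sketch only: the 2×2 `pattern` stands in for a learned filter, and the helper names `correlate2d` and `place` are hypothetical. The same filter is slid over two images that contain the pattern at different positions, and the peak response is compared.

```python
def correlate2d(image, kernel):
    """Valid-mode 2D cross-correlation, the operation CNN layers call convolution."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [[sum(image[r + i][c + j] * kernel[i][j]
                 for i in range(kh) for j in range(kw))
             for c in range(out_w)]
            for r in range(out_h)]


def place(pattern, size, top, left):
    """Put the pattern on an all-zero size x size image at (top, left)."""
    image = [[0] * size for _ in range(size)]
    for i, row in enumerate(pattern):
        for j, value in enumerate(row):
            image[top + i][left + j] = value
    return image


pattern = [[1, 0], [0, 1]]  # toy stand-in for a learned feature
resp_a = correlate2d(place(pattern, 5, 0, 0), pattern)
resp_b = correlate2d(place(pattern, 5, 3, 2), pattern)

# The location of the strongest response moves with the pattern,
# but its strength is the same in both images.
peak_a = max(max(row) for row in resp_a)
peak_b = max(max(row) for row in resp_b)
```

The peak value is identical in both cases; only its location in the response map changes, which is why a later step that keeps the strongest response makes the result independent of position.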

Sub-Sampling

The inputs from the convolution layer can be “smoothed” to reduce the sensitivity of the filters to noise and variations. This smoothing process is called subsampling, and it can be achieved by taking the average or the maximum over a sample of the signal. Examples of subsampling methods (for image signals) include reducing the size of the image (we took our images at 64×64) or reducing the color contrast across the red, green, and blue (RGB) channels.
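As a rough sketch of subsampling by maximum or average, the function below reduces an image by taking one value per non-overlapping window. The name `pool2d` and the 2×2 window size are assumptions chosen for illustration.

```python
def pool2d(image, size=2, mode="max"):
    """Subsample an image using non-overlapping size x size windows.

    mode="max" keeps the largest value in each window;
    any other mode takes the average of the window.
    """
    if mode == "max":
        combine = max
    else:
        combine = lambda values: sum(values) / len(values)
    pooled = []
    for r in range(0, len(image) - size + 1, size):
        row = []
        for c in range(0, len(image[0]) - size + 1, size):
            window = [image[r + i][c + j]
                      for i in range(size) for j in range(size)]
            row.append(combine(window))
        pooled.append(row)
    return pooled


image = [[1, 2, 3, 4],
         [5, 6, 7, 8],
         [9, 10, 11, 12],
         [13, 14, 15, 16]]
max_pooled = pool2d(image, mode="max")    # [[6, 8], [14, 16]]
avg_pooled = pool2d(image, mode="mean")   # [[3.5, 5.5], [11.5, 13.5]]
```

Applied to a 64×64 image, a 2×2 window would give a 32×32 result, so each pooling step halves both dimensions.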

Loss During Training

During training there is an additional layer called the loss layer. This layer tells the neural network whether its inputs were identified correctly and, if not, how far off its predictions were. This helps guide the neural network to reinforce the right concepts as it trains. The loss layer is always the last layer during training.
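One common way to measure "how far off" a prediction is uses softmax followed by cross-entropy. The text does not say which loss was used, so this choice is an assumption, and the function names are illustrative.

```python
import math


def softmax(scores):
    """Turn raw class scores into probabilities that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def cross_entropy(scores, true_class):
    """Loss is small when the true class gets high probability."""
    return -math.log(softmax(scores)[true_class])


# The true class is 0 in both cases.
loss_right = cross_entropy([5.0, 0.0, 0.0], 0)  # confident and correct
loss_wrong = cross_entropy([0.0, 5.0, 0.0], 0)  # confident and wrong
```

The wrong prediction produces a much larger loss than the correct one, and that difference is the feedback used to adjust the network during training.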

Data Set

We use two data sets to train the model on temporal and spatial features. The way features are trained in the two data sets differs according to the inputs given to the CNN. By combining the two data sets, we achieved greater accuracy.

Both data sets use American Sign Language gestures, with approximately 105600 images belonging to 26 gesture categories. There are approximately 4160 images per category.

First Approach

In this approach, we used our Inception model, a CNN, to obtain spatial features for individual frames. Each video was then represented by the series of predictions made by the CNN for each of its frames.
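The resulting data shape can be sketched as below: a video with T frames becomes a list of T prediction vectors, one score per gesture category. The function `toy_predict` is a hypothetical stand-in for the trained CNN and does nothing meaningful with the pixels; only the shape of the output matters here.

```python
def video_to_sequence(frames, predict_frame):
    """Represent a video as the ordered list of per-frame predictions."""
    return [predict_frame(frame) for frame in frames]


def toy_predict(frame, num_classes=26):
    """Hypothetical stand-in for the CNN: one score per class, summing to 1."""
    brightness = sum(sum(row) for row in frame)
    scores = [1.0] * num_classes
    scores[brightness % num_classes] += num_classes  # favor one class
    total = sum(scores)
    return [s / total for s in scores]


# Two tiny made-up "frames" of integer pixel values.
frames = [[[1, 2], [3, 4]], [[0, 0], [0, 1]]]
sequence = video_to_sequence(frames, toy_predict)
```

Each entry of `sequence` has 26 values, matching the 26 gesture categories, and the order of the entries follows the order of the frames.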

Extracting Frames & Removing Background

Each gesture video is first split into a series of frames. Next, the frames are processed to remove all noise in the image, that is, everything except the hands. The final image keeps only the skin-colored hand regions, so that the model learns from the hands rather than from the background.
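A simple way to keep only skin-colored pixels is a per-pixel color rule. The thresholds below are a widely used rule-of-thumb for RGB skin detection and are an assumption here; the text does not describe the actual filter, and `is_skin` and `remove_background` are hypothetical names.

```python
def is_skin(r, g, b):
    """Rule-of-thumb RGB skin test (assumed heuristic, not the project's filter)."""
    return (r > 95 and g > 40 and b > 20
            and max(r, g, b) - min(r, g, b) > 15
            and abs(r - g) > 15 and r > g and r > b)


def remove_background(frame):
    """Keep skin-colored pixels and set everything else to black."""
    return [[pixel if is_skin(*pixel) else (0, 0, 0) for pixel in row]
            for row in frame]


# A made-up 2x2 frame of (R, G, B) pixels.
frame = [[(200, 140, 120), (30, 30, 30)],
         [(10, 200, 10), (220, 170, 150)]]
cleaned = remove_background(frame)
```

Fixed thresholds like these are sensitive to lighting and do not cover all skin tones, so a real pipeline would likely need a more robust segmentation step.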

Result

Hand gestures are a powerful means of human communication, with many potential applications in the field of human-computer interaction. Vision-based hand gesture recognition techniques have many proven advantages over traditional devices. However, recognizing hand gestures is a difficult problem, and the current study is only a small contribution towards the results needed in the field of sign language gesture recognition.

We achieved 97% accuracy, which shows that this method can be used successfully to classify sign language gestures. We want to extend our work to recognize sign language gestures more accurately. This method for individual gestures can also be extended to sentence-level sign language.